Employee intake for broken internal AI tools

AI problems don’t start as incidents.

They start as small moments of lost trust.

A humane intake layer for employees using internal AI tools — so teams can see what’s broken before it becomes costly.

When AI gets in the way of work, people need somewhere to put that frustration. This is where employees say what’s wrong with AI — in their own words.

Where AI fails quietly

AI rarely breaks all at once. It slips. It confuses. It creates extra work. People stop trusting it — long before anyone files a ticket.

By the time issues show up as incidents, the signal is already old.

From incidents to early signals

erode treats employee complaints as signals — not failures. It captures moments of frustration as they happen and helps teams see where internal AI is getting in the way of work.

Not to monitor. Not to judge. But to understand what to fix next.

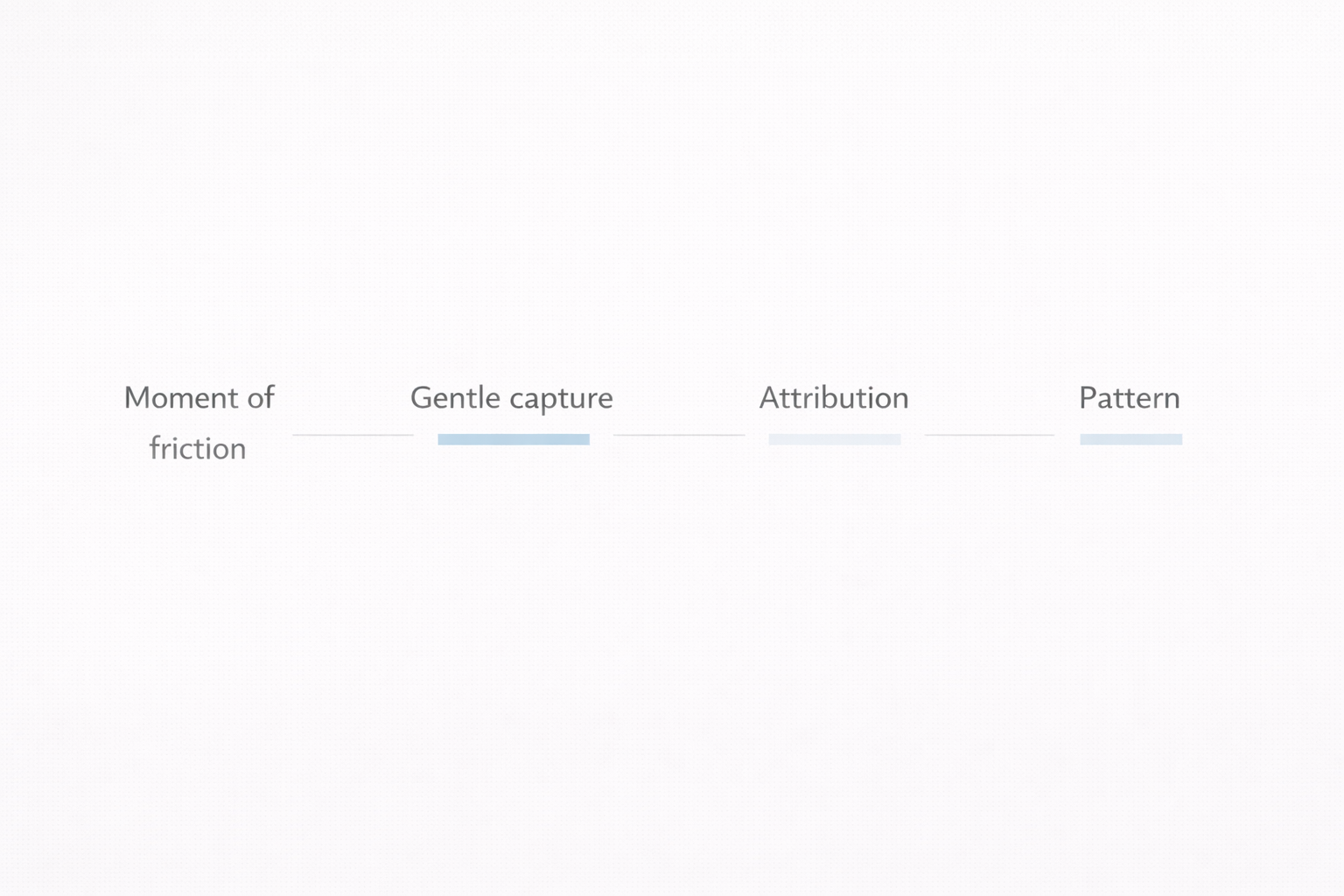

How trust signals become insight

Step 1

An employee complains

Someone hits a broken AI tool or workflow and says what’s wrong.

Step 2

It’s captured gently

A calm Slack interaction records context without forms, tickets, or retrospectives.

Step 3

The system structures it

The complaint is automatically attributed to the workflow and model involved.

Step 4

Patterns surface

The company sees patterns across teams before issues escalate.
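To make steps 3 and 4 concrete, here is a minimal sketch of how a complaint might be structured and then grouped into cross-team patterns. Everything in it is an assumption for illustration: the keyword routing table `ROUTES`, the `Signal` fields, the workflow and model names, and the `min_teams` threshold are hypothetical and do not describe how erode actually performs attribution.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical routing table: keyword -> (workflow, model).
# Illustrative only; the real attribution method is not described on this page.
ROUTES = {
    "summary": ("meeting-summaries", "summarizer-v2"),
    "ticket": ("support-triage", "triage-classifier"),
    "search": ("internal-search", "retrieval-ranker"),
}


@dataclass(frozen=True)
class Signal:
    """A complaint after structuring (step 3)."""
    team: str
    text: str
    workflow: Optional[str]
    model: Optional[str]


def structure(team: str, text: str) -> Signal:
    """Attribute a free-text complaint to a workflow and model by keyword."""
    lowered = text.lower()
    for keyword, (workflow, model) in ROUTES.items():
        if keyword in lowered:
            return Signal(team, text, workflow, model)
    # No match: keep the complaint, leave attribution empty.
    return Signal(team, text, None, None)


def surface_patterns(signals, min_teams=2):
    """Return workflows reported by at least `min_teams` distinct teams (step 4)."""
    teams_by_workflow = {}
    for s in signals:
        if s.workflow is not None:
            teams_by_workflow.setdefault(s.workflow, set()).add(s.team)
    return sorted(w for w, teams in teams_by_workflow.items() if len(teams) >= min_teams)


complaints = [
    ("sales", "The summary left out every action item again"),
    ("legal", "Summary of the call was wrong"),
    ("support", "Ticket got routed to the wrong queue"),
]
signals = [structure(team, text) for team, text in complaints]
patterns = surface_patterns(signals)
print(patterns)  # ['meeting-summaries']
```

In this sketch a workflow only counts as a pattern once more than one team has reported it, which keeps the output about workflows rather than about individual employees.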

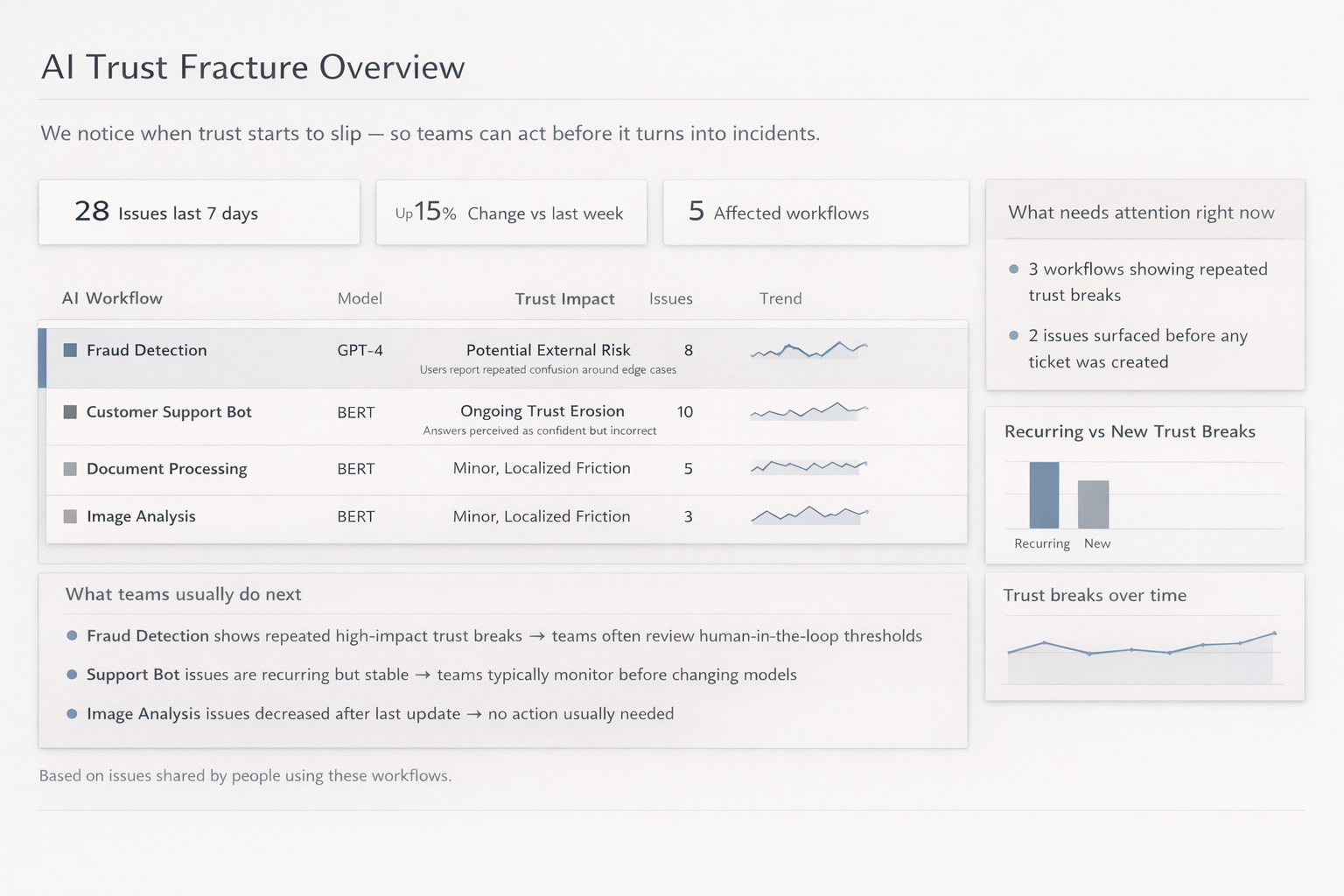

See what’s broken before it becomes costly

Teams don’t just read complaints. They see patterns: which workflows are affected, what’s recurring, and what’s new.

The view stays aggregated, calm, and actionable.
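One way to picture the split between what is recurring and what is new is to compare two time windows of already-attributed complaints. The sketch below assumes each complaint has been reduced to a workflow name; the function `classify_patterns`, the weekly windows, and the workflow names are hypothetical and only illustrate the idea.

```python
from collections import Counter


def classify_patterns(previous, current):
    """Label each workflow seen in the current window as 'recurring' or 'new'.

    `previous` and `current` are lists of workflow names, one per complaint.
    Only aggregated counts are returned, never individual complaints.
    """
    prev_counts = Counter(previous)
    curr_counts = Counter(current)
    report = {}
    for workflow, count in curr_counts.items():
        status = "recurring" if prev_counts[workflow] > 0 else "new"
        report[workflow] = {"status": status, "count": count}
    return report


last_week = ["meeting-summaries", "internal-search", "meeting-summaries"]
this_week = ["meeting-summaries", "support-triage", "support-triage"]
report = classify_patterns(last_week, this_week)
print(report)
```

Here `meeting-summaries` is labeled recurring because it appeared in both windows, while `support-triage` is labeled new.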

Know what to fix next

- Surface what’s broken before it becomes costly

- Understand which workflows frustrate employees the most

- Prioritize fixes that remove friction fast

- Improve internal AI without burning employee goodwill

Built for companies running internal AI

This product is for companies where employees rely on AI to get work done — not for AI vendors or external model providers.

Typically used by

- Product leaders responsible for AI-driven experiences

- AI / ML teams shipping models into real workflows

- Engineering teams supporting AI-heavy systems

Not designed for

- Isolated experiments

- One-off internal tools

- AI used only for personal productivity

Fix what’s broken before it spreads

For internal teams — not AI vendors. No monitoring. No surveillance.